-

Writing code by hand is dead

The landscape of software engineering is changing. Rapidly. As my colleague Ben likes to say, we will probably stop writing code by hand within the next year.

This comes with a move toward orchestration, and a fundamental change in how we engage with our craft. Many of us became coders first, software engineers second. There’s a lot more to software engineering than coding, but coding is our first love.

Coding is comfortable, coding is fun, coding is safe. For many of us, the actual writing of syntax was never the bottleneck anyway. But now, you can command swarms of agents to do your bidding (until the compute budget runs out, at least, and we collectively decide that maybe junior engineers aren’t a terrible investment after all).

The day-to-day reality of the job is shifting. Instead of writing greenfield code or getting into the flow state to debug a complex problem, you’re now multitasking. You’re switching between multiple long-running tasks, directing AI agents, and explaining to these eager little toddlers that their assumptions are wrong, their contexts are overflowing, or they need to pivot and do X, Y, and Z.

And that requires endless context switching. Humans cannot truly multitask; our brains just rapidly switch context across multiple threads. Inevitably, some of that context gets lost. It’s cognitively exhausting, but it feels hyper-productive because instead of doing one thing, you’re doing three, even if the organizational overhead means it actually takes four times as long to get them all over the finish line.

This is, historically, what staff software engineers do. They don’t write much code. They juggle organizational bits and pieces, align architecture, and have engineers orbiting around them executing on the vision. It’s a fine job, and highly impactful, but it’s a fundamentally different job. It requires a different set of skills, and it yields a different type of enjoyment. It’s like people management, but without the fun part: the people.

As an industry, we’re trading these intimate puzzles for large-scale system architecture. Individual developers can now build at the scale of a whole product team. But scaling up our levels of abstraction always leaves something visceral behind.

It was Ben who first pointed out to me that many of us will grieve writing code by hand, and he’s absolutely right. We will miss the quiet satisfaction of solving an isolated problem ourselves, rather than herding fleets of stochastic machines. We’ll adjust, of course. The field will evolve, the friction will decrease, and the sheer scale of what we can create will ultimately make the trade-off worth it.

But the shape of our daily work has permanently changed, and it’s okay to grieve the loss of our first love. Consider this post your permission to do so.

-

AI, Vim, and the illusion of flow

I’ve been using AI in my job a lot more lately — and it’s becoming an explicit expectation across the industry. Write more code, deliver more features, ship faster. You know what this makes me think about? Vim.

I’ll explain myself, don’t worry.

I like Vim. Enough to write a book about the editor, and enough to use Vim to write this article. I’m sure you’ve encountered colleagues who swear by their Vim or Emacs setups, or you might be one yourself.

Here’s the thing most people get wrong about Vim: it isn’t about speed. It doesn’t necessarily make you faster (although it can), but what it does is keep you in the flow. It makes text editing easier — it’s nice not having to hunt down the mouse or hold an arrow key for exactly three and a half seconds. You can just delete a sentence. Or replace text inside the parentheses, or maybe swap parentheses for quotes. You’re editing without interruption, and it gives your brain space to focus on the task at hand.

AI tools look similar on the surface. They promise the same thing Vim delivers: less friction, more flow, your brain freed up to think about the hard stuff. And sometimes they actually deliver on that promise! I’ve had sessions where an AI assistant helped me skip past the tedious scaffolding and jump straight into the interesting architectural problem. There’s lots of good here.

But I think the difference between AI and Vim explains a lot of the discomfort engineers are feeling right now.

The depth problem

When I use Vim, the output is mine. Every keystroke, every motion, every edit — it’s a direct translation of my intent. Vim is a transparent tool: it does exactly what I tell it to do, nothing more. The skill floor and ceiling are high, but the relationship is honest. I learn a new motion, I understand what it does, and I can predict its behavior forever. There’s no hallucination.

`ci)` will always change text inside parentheses. It won’t sometimes change the whole paragraph because it misunderstood the context.

AI tools have a different relationship with their operator. The output looks like yours, reads like yours, and certainly looks more polished than what you would produce on a first pass. But it isn’t a direct translation of your intent. Sometimes it’s a fine approximation. Sometimes it’s subtly wrong in ways you won’t catch until a hidden bug hits production.

This is what I’d call the depth problem. When I use Vim, nobody can tell from reading my code whether I wrote it in Vim, VS Code, or Notepad. The tool is invisible in the artifact. And that’s fine, great even - because the quality of the output still depends entirely on me. My understanding of the problem, my experience with the codebase, my judgment about edge cases, my ability to produce elegant code - all of that shows up in the final product, regardless of which editor I used to type it up.

AI inverts this. The tool is extremely visible in the artifact - it shapes the output’s style, structure, and polish - but the operator’s skill level becomes invisible. Everything comes out looking equally competent. You can’t tell from a pull request whether the author spent thirty minutes carefully steering the AI through edge cases or just hit accept on the first suggestion.

That’s a huge problem, really. Before, a bad pull request was easy to spot. A junior engineer would often give you “hints” by not following the style guide or established conventions, which would tip you off and lead you to discover a major bug or a missed corner case.

AI output, on the other hand, always looks polished. We’ve lost a key signal that makes our engineering spidey sense tingle. Now every line of code, every pull request is suspect. And that’s exhausting.

Looking done versus being right

I just read Ivan Turkovic’s excellent *AI Made Writing Code Easier. It Made Being an Engineer Harder* (thanks for the share-out, Ben), and I couldn’t agree more with his core observation. The gap between “looking done” and “being right” is growing, and it’s growing fast.

You know what’s annoying? When your PM can prototype something in an afternoon and expects you to get that prototype “the rest of the way done” by Friday. Or the same day, if they’re feeling particularly optimistic about what “the rest of the way” means (my PMs are wonderful and thankfully don’t do this).

But either way I don’t blame them, honestly. The prototype looks great. It’s got real-ish data, it handles the happy path, and it even has a loading spinner. It looks like a product. And if I could build this in two hours with an AI tool - well, how hard could it be for a full-time engineer to finish it up?

The answer, of course, is that the last 10% of the work is 90% of the effort. Edge cases, error handling, validation, accessibility, security, performance under load, integration with existing systems, observability - none of that is visible in a prototype, and AI tools are exceptionally good at producing work that doesn’t have any of it. The prototype isn’t 90% done. It just looks 90% done.

Of course, there’s an education component: understanding the difference between surface-level polish and structural soundness. But there’s a deeper problem too, and it’s hard to solve with education alone.

The empathy gap

My friend and colleague Sarah put this better than I could: we’re going to need lessons in empathy.

Here’s what she means. When a PM can spin up a working prototype in an afternoon using AI, they start to believe - even subconsciously - that they understand what engineering involves. When an engineer uses AI to generate user-facing documentation, they start to think the tech writer’s job is trivial. When a designer uses AI to write frontend code, they wonder why the team needs a dedicated frontend engineer.

And none of these people are wrong about what they experienced. The PM really did build a working prototype. The engineer really did produce passable documentation. But the conclusion - that they “did the other person’s job” and the job is therefore easy - is completely wrong.

Speaking of Sarah: Sarah is a staff user experience researcher. It’s Doctor Sarah, actually. I had the opportunity to contribute to a research paper, used AI to structure my contributions, and was oh-so-proud of the work, because it looked exactly like the countless research papers I’ve read over the years. Sarah scanned through my contributions and was real proud of me. Until she sat down to read what I wrote, and had to rewrite just about everything I “contributed” from scratch.

AI gives everyone a surface-level ability to contribute across almost any domain or role. And surface-level ability is the most dangerous kind, because it comes with surface-level understanding and full-depth confidence. Modern knowledge jobs are often understood by their output: tech writers by the documents they produce, designers by their mocks, and software engineers by their code. But none of those artifacts is the core skill of its role. Tech writers are really good at breaking down complex concepts in ways most people can understand and internalize. Designers build intuition and understanding of how people behave and engage with all kinds of stuff. Software engineers solve problems. AI tools can’t do those things.

The path forward isn’t to gatekeep or to dismiss AI-generated contributions. It’s to build organizational empathy - a genuine understanding that every discipline has depth that isn’t visible from the outside, and that a tool which lets you produce artifacts in another person’s domain doesn’t mean you understand that domain.

This is, admittedly, not a new problem. Engineers have underestimated designers since the dawn of software. PMs have underestimated engineers for just as long. But AI is pouring fuel on this particular fire by making everyone feel like a competent generalist.

So what do we actually do?

I don’t want to be the person writing yet another “AI is ruining everything” essay. Frankly, there are enough of those. AI tools are genuinely useful - I use them daily, they make certain kinds of work better, and they’re here to stay. The scaffolding, the boilerplate, the “I know exactly what this should look like but I don’t want to type it out” moments - AI is great for those. Just like Vim is great for the “I need to restructure this method” moments.

A few things I think help, borrowing from Turkovic’s recommendations and adding some of my own:

Draw clear boundaries around AI output. A prototype is a prototype, not a product. AI-generated code is a first draft, not a pull request. Making this explicit - in team norms, in review processes, in how we talk about work - helps close the gap between appearance and reality.

Invest in education, not just adoption. Rolling out AI tools without teaching people how to evaluate their output is like handing someone Vim without explaining modes. They’ll produce something, sure, but they won’t understand what they produced. And unlike Vim, where the failure mode is `jjjjjjkkkkkk:help!` in your file, the failure mode with AI is shipping code that looks correct and isn’t.

Build empathy across disciplines. This is Sarah’s point, and I think it’s the most important one. If AI makes it easy for anyone to produce surface-level work in any domain, then we need to get much better at respecting the depth beneath the surface. That means engineers sitting with PMs to understand their constraints, PMs shadowing engineers through the painful parts of productionization, and everyone acknowledging that “I made a thing with AI” is the beginning of a conversation, not the end of one.

Protect your flow. This is the Vim lesson. The best tools are the ones that serve your intent without distorting it. If an AI tool is helping you think more clearly about the problem, great. If it’s generating so much output that your job has shifted from “solving problems” to “reviewing AI’s work” - that’s not flow. That’s a different job, and it might not be the one you signed up for.

I keep coming back to this: Vim is a good tool because it does what I mean. The gap between my intent and the output is zero. AI tools are useful, sometimes very useful, but that gap is never zero. Knowing when the gap matters and when it doesn’t - that’s a core skill for where we are today.

P.S. Did this piece need a Vim throughline? No, it didn’t. But I enjoyed shoehorning it in regardless. I hear that’s going around lately.

All opinions expressed here are my own. I don’t speak for Google.

-

Are AI productivity gains fueled by delivery pressure?

A Multitudes study, which followed 500 developers, found an interesting soundbite: “Engineers merged 27% more PRs with AI - but did 20% more out-of-hours commits”.

While I won’t comment on the situation at Google, there are many anecdotes online from folks raising concerns about increased work pressure. When the response to “I’m overloaded” becomes “use AI”, we’re heading for unsustainable workloads.

The problem is compounded by the fact that AI tools excel at prototyping - the type of work which makes other work happen. Now, your product manager can prototype an idea in a couple of hours, fill it with real (but often incorrect) data, sell the idea to stakeholders, and set goals to productionize it a week later.

“Look - the prototype works, and it even uses real data. If I could do this in a couple of hours, how hard could this be for an experienced engineer?” - while I haven’t heard these exact words, the sentiment is widespread (again, online).

In a world where AI provides a surface-level ability to contribute across almost any role, the path to avoiding global burnout is to focus on building empathy. Just because an LLM can churn out a document doesn’t mean it’s actually good writing, and we’re certainly not at the point where a handful of agents can replace a seasoned PM. However, because the output looks polished - especially to those without deep domain knowledge - it’s easy to fall into the trap of thinking you’ve done someone else’s job for them.

That gap between “looking done” and “being right” is exactly where the extra professional pressure begins to mount. This is really caused by the way we still measure knowledge worker productivity - by the sheer number of artifacts they produce, rather than the outcomes of the work.

The right way to leverage AI in the workplace is as a license to work better and focus on the right things, not as a mandate to produce more things faster.

-

Homepage for a home server

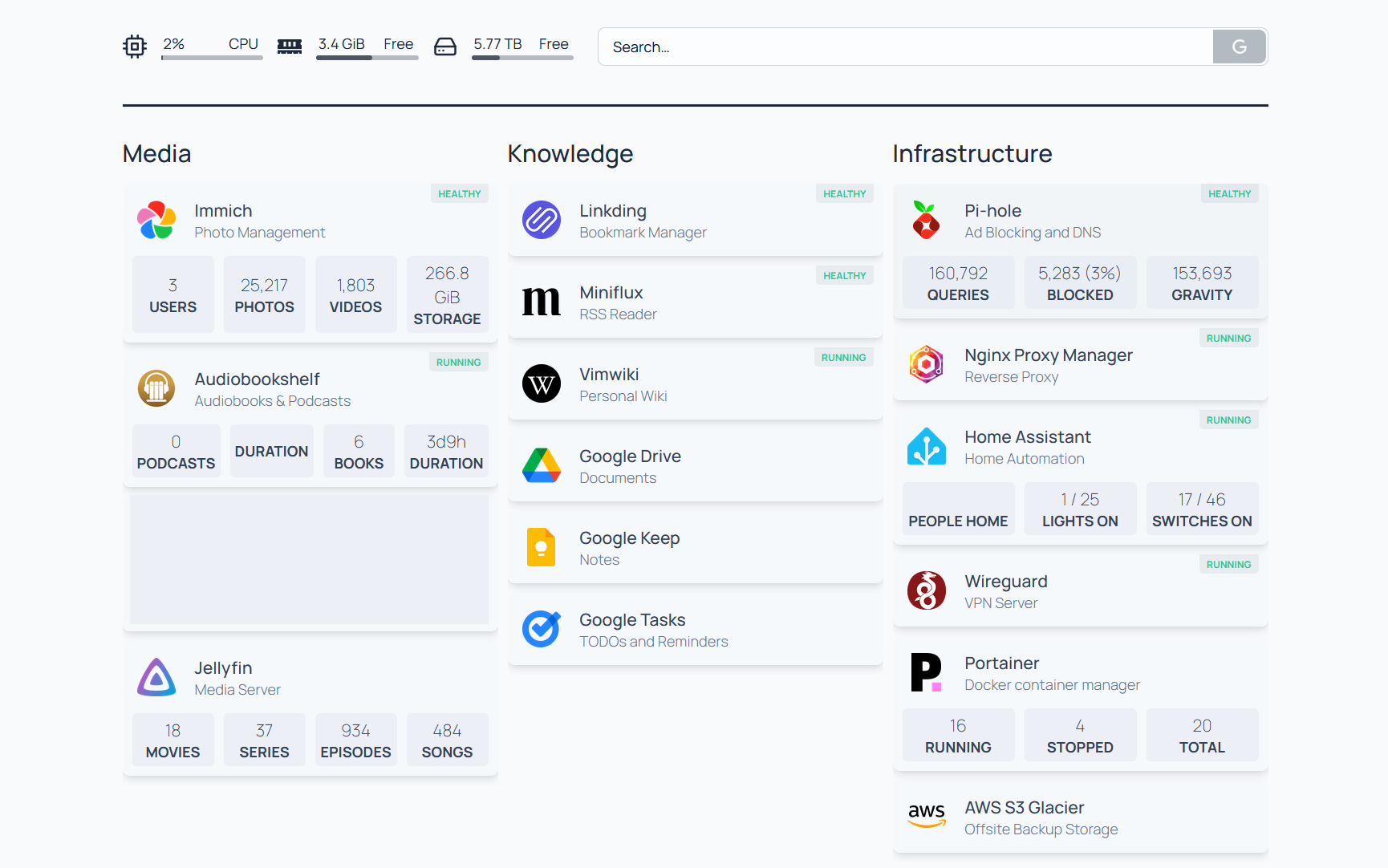

I have a NAS (Network-Attached Storage) which doubles as a home server. It’s really convenient to have a set of always-on, locally hosted services, which let me read RSS feeds without distractions, host my own photo storage, or move away from streaming services toward organizing my (legally owned) collections of movies, shows, and audiobooks.

At this point I have 20-or-so services running, and sometimes it gets hard to keep track of what’s where. For that, I found Homepage: a simple, fast, lightweight page which connects to all of my services for some basic monitoring and reminds me what my service layout looks like.

The screenshot above shows what I built.

I love that the configuration lives in YAML files, and because the page is static, it loads real fast. Many widgets provide info about various services out of the box. It’s neat.

There’s definitely a question of how much I’ll keep this up-to-date: it’s not an automatically populated dashboard, and editing is a two-step process (SSH into the machine, edit the YAML configs) - which adds some friction. We’ll have to wait and see, but for now I’m excited about my little dashboard.

-

What I won't write about

Howdy.

I’ve been writing a lot more over the past year. In fact, I’ve written at least once a week, and this is article number 60 within the past year. I did this for many reasons: to get better at writing, to get out of a creative rut, to play around with different writing voices, but also because I wanted to move my blog from a dry tech blog to something I myself am a little more excited about.

I started this blog in 2012, documenting my experiences with various programming tools and coding languages. I felt like I contributed by sharing tutorials, and having some public technical artifacts helped during job searches.

Over the years I branched out: short reviews of books I’ve read, accounts of my travels (and of turning my Prius into a car camper to do so), notes on personal finance… All of this shares a theme: descriptive writing.

I feel most confident describing and recounting events and putting together tutorials. It’s easy to verify if I’m wrong - an event either happened or didn’t, the tool either worked - or didn’t. And I was there the whole time. That kind of writing doesn’t take much soul and grit, and while it’s pretty good at drawing traffic to the site (eh, which is something I don’t particularly care about anymore), I wouldn’t call it particularly fulfilling. Creatively, at least.

I’m scared to share opinions, because opinions vary and don’t have a ground truth. It’s easier to be completely wrong, or to look like a fool. I don’t want to be criticized for my writing. Privacy is a concern too: despite writing publicly, I consider myself a private person.

So, after 13 years of descriptive writing, I made an effort to experiment in 2025. I wrote down some notes on parenthood, my thoughts on AI and Warhammer, nostalgia, identity, ego… I wrote about writing, too.

It’s been a scary transition, and it still is. I have to fight myself to avoid putting together yet another tutorial or an observation on modal interfaces. I’ve been somewhat successful though, as I even wrote a piece on my anxiety about sharing opinions.

But descriptive writing keeps sneaking in, trying to reclaim the field.

You see, I write under my own name. I like the authenticity this affords me, and it’s nice not having to make a secret blog (which I would eventually leak by accident, knowing my forgetfulness). I mean, this blog has been running for 14 years now; that’s gotta count for something.

But writing under my own name also presents a major problem. It’s my real name. If you search for “Ruslan Osipov”, my site’s at the top. I don’t hide who I am, and you can quickly confirm my identity by going to my about page. This means that friends, colleagues, neighbors, bosses, government officials - anyone - can easily find my writing. If there are people out there who don’t like me - for whatever reason - they can read my stuff too.

The more I write, the more I learn that good writing is 1) passionate and 2) vulnerable (it’s also well structured, but I have no intention of restructuring this essay - so you’ll just have to sit with my fragmented train of thought).

It’s easy to write about things I’m passionate about. I get passionate about everything I get involved in, from parenting and housework to my work. I’m writing this article in Vim, and I’m passionate enough about that to have written a book on the subject.

Vulnerability is hard. Good writing is raw: it makes the author feel things and leaves little bits and pieces of the author scattered on the page. You just can’t fake authenticity. But here’s the thing - real life is messy. Babies throw tantrums, work gets stressful, the world changes in ways you might not like. That isn’t something you want the whole world to know.

Especially if that world involves a prospective employer, for example. So you have to put up a facade, and filter topics that could pose risk. I’m no fool: I’m not going to criticize the company that pays me money. I like getting paid money, it buys food, diapers, and video games.

I still think it’s a bit weird and restrictive that a future recruiter is curating my writing today. The furthest I’m willing to push the envelope here is my essay on corporate jobs and self-worth.

Curation applies to more than work-related topics, of course. And that might even be a good thing. When I write about my upbringing, I don’t just reminisce; it’s a brief jumping-off point into my obsession with productivity. Curation is just good taste. You’re not getting my darkest, messiest, snottiest remarks. You’re getting a loosely organized, tangentially related set of ideas. Finding that gradient has been exciting.

So, here’s what I won’t write about. I won’t share too many details about our home life. I won’t complain about a bad day at work. I won’t badmouth people.

But I will write about what those things feel like - the tiredness, the frustration, the ego.