Numenera for D&D players

Before the COVID-19 shelter-in-place order came to be, I had played Dungeons and Dragons on and off for 10 or so years. I unfortunately don’t have the patience to be a great player, but I enjoy running the game – being a Game Master constantly engages me, and I love seeing players have fun and overcome obstacles together.

Having to stay inside, I had a prime opportunity to try something new, exciting, and less rules-heavy than D&D: that’s how I discovered Numenera. Well, I’ve been familiar with the concept for some time: I played Planescape: Torment – with Planescape being an inspiration for the setting of Numenera, and I’ve recently completed Torment: Tides of Numenera – a spiritual successor to Planescape: Torment, which uses the Cypher System rules… I’m getting ahead of myself, let me back up.

Numenera is a science fantasy tabletop role-playing game set in the (oh so) distant future. The “Cypher System” is the tabletop game engine which Numenera runs on. The system is known for emphasizing storytelling over rules, and for its use of “cyphers” - one-time abilities meant to shake up gameplay. Want to teleport? You don’t have to be a level 10 wizard to do that. Find the right cypher, and you can teleport, but only once. This keeps gameplay from getting stale, and provides players with exciting abilities from the get-go.

For the past few months I’ve been running a Numenera campaign for a group of friends, and we’ve had a blast so far! We’ve been playing over Google Hangouts, without miniatures or services like Roll20, and the game has held up well.

It took some time for players to get used to the differences between the systems – and some picked up the new mindset faster than others. Here are the key concepts one had to learn to get used to Numenera. This overview is not complete, but I think it helps.

Numenera, cyphers, and artifacts

Numenera is just a catch-all term for weird devices and phenomena. Now you don’t have to say “that device and/or contraption”: you can say “numenera” instead. You’re welcome!

Cyphers are items that give characters fun and unique one-off powers. Cyphers are plentiful, and using them like they’re going out of fashion is the key to having fun in Numenera. It’s called “Cypher System” for this exact reason.

Artifacts are much rarer, and can be used multiple times (often requiring a die roll to check if they deplete).

Actions, skills, assets, and effort

Every task in Numenera has a difficulty rated on a scale from 0 to 10. Be it sprinting on the battlefield, picking a lock, convincing a city guard you’re innocent, or hitting that guard on the back of her head with a club. 0 is a routine action, like you sitting right now and reading this, or maybe going over to the fridge to grab a beer. Difficulty 10 is getting the Israeli prime minister to abandon his post, fly over to wherever you live, and serve you that beer. Oh and you’re in charge of Israel now too, your majesty.

Your GM will tell you the difficulty of the task.

The only die players use in Numenera is d20 - the trusty 20-sided die (and that’s already a lie - in a few cases d6 is also used). Multiply difficulty by 3, and that’s the number you’ll have to reach with your d20 roll. Juggling is a difficulty 4 task. This means you’ll have to roll 12 (4 * 3) or higher to succeed.
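The "multiply by 3" rule above is easy to put into numbers. Here is a minimal Python sketch; the function names `target_number` and `success_chance` are made up for illustration, and the chance assumes a plain d20 roll with no skills, assets, or effort applied.

```python
def target_number(difficulty: int) -> int:
    """Number a d20 roll must meet or beat: the difficulty times 3."""
    if not 0 <= difficulty <= 10:
        raise ValueError("difficulty is rated on a scale from 0 to 10")
    return difficulty * 3


def success_chance(difficulty: int) -> float:
    """Chance that a single unmodified d20 roll reaches the target number."""
    target = target_number(difficulty)
    winning_faces = 21 - target  # faces from `target` up to 20
    return max(0, min(20, winning_faces)) / 20


for difficulty in (0, 4, 6, 7):
    print(difficulty, target_number(difficulty), success_chance(difficulty))
```

Juggling (difficulty 4) needs a 12, which a bare d20 reaches 45% of the time. From difficulty 7 up, the target is 21 or more, so the task is out of reach of an unmodified roll and has to be made easier first.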

But what if I’m an experienced juggler you say? I’m glad you asked: you can be either trained or specialized in a skill, reducing the difficulty by 1 and 2 respectively. Juggling for someone who is specialized in juggling is a difficulty 2 (4 - 2) task. You can reduce difficulty by up to 2 levels using skills. There is no definitive list of skills in Numenera, and you can choose to be skilled in anything you want, be it mathematics, dancing, or magic tricks.

Are you juggling well-balanced pins? That’s an asset, further reducing the difficulty by 1. You can apply up to 2 levels of assets to reduce task difficulty. Just like with skills, there’s no strict definition of what constitutes an asset. Be creative!

Finally, you can apply effort to a task. Expend effort, and the task becomes more manageable. Effort is limited by how advanced the character is, and starting characters can’t apply more than 1 level of effort.

Hence, a specialized juggler (-2) juggling well balanced pins (-1) and applying effort (-1) reduces task difficulty from level 4 to 0, making it a trivial task – no need to roll to succeed!
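The juggling example can be sketched in a few lines of Python. This is an illustration only: `effective_difficulty` is a made-up helper, and it assumes skills and assets are each capped at 2 levels, as described above, while the effort cap is left to the caller.

```python
def effective_difficulty(base: int, skill: int = 0, assets: int = 0, effort: int = 0) -> int:
    """Difficulty after skills, assets, and effort are applied.

    skill:  0 (untrained), 1 (trained), or 2 (specialized)
    assets: levels of helpful circumstances or gear, at most 2 count
    effort: levels of effort applied (limited by the character, not here)
    """
    skill = min(skill, 2)
    assets = min(assets, 2)
    return max(0, base - skill - assets - effort)


juggling = 4
final = effective_difficulty(juggling, skill=2, assets=1, effort=1)
if final == 0:
    print("difficulty 0: routine task, no roll needed")
else:
    print(f"difficulty {final}: roll {final * 3} or higher on a d20")
```

With only the specialization applied, the same task lands at difficulty 2, so a roll of 6 or higher succeeds.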

Stat pools, edge, and more about effort

Characters have three primary stats - might, speed, and intellect. There’s no health or other resources to track, but your stat pools do deplete. Did you get punched in the face? Deduct damage from your might pool. Exerted effort to concentrate on juggling? Pay the price through your speed pool. Trying to figure out how something works? Reduce your intellect pool.

You use the same resource to exert yourself and to take damage. As your pools deplete, your character becomes debilitated, and once all 3 pools drop to 0 - your character dies. There’s nothing more to it.

The stat pools replenish by resting, and you can rest 4 times a day - for a few seconds, 10 minutes, 1 hour, and 10 hours. When you rest - restore 1d6 + tier (1 for beginning character) points spread across pools of your choice.
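A recovery roll is simple enough to sketch in Python. The helper names below are hypothetical, and capping each pool at a maximum value is my assumption rather than something spelled out above.

```python
import random


def recovery_roll(tier: int, rng: random.Random) -> int:
    """Points restored by one rest: 1d6 plus the character's tier."""
    return rng.randint(1, 6) + tier


def apply_recovery(pools: dict, maximums: dict, allocation: dict, points: int) -> dict:
    """Spread recovered points across pools as the player chooses.

    Assumption: a pool never goes above its listed maximum.
    """
    if sum(allocation.values()) > points:
        raise ValueError("cannot allocate more points than were rolled")
    return {
        stat: min(maximums[stat], current + allocation.get(stat, 0))
        for stat, current in pools.items()
    }


rng = random.Random(7)  # seeded only so the example is repeatable
points = recovery_roll(tier=1, rng=rng)
pools = {"might": 4, "speed": 9, "intellect": 6}
maximums = {"might": 12, "speed": 10, "intellect": 8}
# Put everything into might after taking a beating.
print(points, apply_recovery(pools, maximums, {"might": points}, points))
```

A tier 1 character gets between 2 and 7 points back per rest.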

Each character also has “edge”. Edge is an affinity in a particular stat, and reduces all resource costs in that pool. If you have edge 2 in might, each time you expend that resource, you reduce the cost by 2. Bashing with your shield costs 1 might? That’s dropped to 0 for you. Moving a tree blocking the road all by yourself will cost 5 might points? That’s now dropped down to 3.

This becomes particularly relevant when applying effort. Applying effort makes tasks easier, but costs points from a stat pool. The first level of effort costs 3 points, and each subsequent level costs 2. Say you’re trying to climb a tree (difficulty 3) and want to expend 2 levels of effort to make the task easier. That would cost you 5 points from your might pool, and your edge 1 in might drops the cost to 4.
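Here is the same arithmetic as a short Python sketch. `effort_cost` and `reduced_cost` are illustrative names, and flooring costs at 0 follows the shield bash example rather than any rule text quoted here.

```python
def reduced_cost(cost: int, edge: int = 0) -> int:
    """Pool points actually paid once edge is subtracted (never below 0)."""
    return max(0, cost - edge)


def effort_cost(levels: int, edge: int = 0) -> int:
    """Cost of applying effort: 3 for the first level, 2 for each one after."""
    if levels <= 0:
        return 0
    base = 3 + 2 * (levels - 1)
    return reduced_cost(base, edge)


print(effort_cost(levels=2, edge=1))  # climbing the tree with two levels of effort
print(reduced_cost(1, edge=2))        # shield bash with edge 2 in might
print(reduced_cost(5, edge=2))        # moving the fallen tree with edge 2 in might
```

The three lines print 4, 0, and 3, matching the examples in the text.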

Don’t overthink it for now.

Tiers, experience, and GM intrusions

Every Numenera character has a tier, which is akin to levels in D&D. There are 6 tiers, with each more advanced than the last. However, unlike in Dungeons and Dragons, tiers are not critical to character advancement: characters get just as much if not more utility from cyphers, artifacts, and the way they interact with the world.

Experience is given out by the Game Master for accomplishing narrative milestones. Experience is also given during GM intrusions, the Cypher System’s signature narrative mechanic. At any point throughout the session, the GM can introduce unexpected consequences for player actions: a rope snaps, a poisoned villain has an antidote, or a previously hidden enemy attacks a passing player. Each time an intrusion happens, the affected player gets 2 experience points - 1 for themselves, and 1 to give away to another player.

GM intrusions allow the GM to adjust the difficulty of the game and introduce fun challenges on the fly. Many GMs already do this in their games, but the Cypher System awards the players experience when this happens, associating unexpected events with a positive stimulus.

In Numenera, experience points are a narrative currency, and a way for players to influence the direction of the story. Experience is often counted in single digits, and can be spent in various ways:

- 1 XP: re-roll a die, or resist GM intrusion

- 2 XP: gain a short-term benefit, like learning a local dialect, or becoming trained in a narrowly applicable skill

- 3 XP: gain a long-term benefit: make an ally, establish a base of operations, or gain an artifact

- 4 XP: increase your pools, learn a skill, gain an edge, increase the amount of effort you can expend, get an additional ability, improve pool recovery rolls, or reduce armor penalty

Once you’ve spent 4 XP to advance your character 4 times, you advance to the next tier. All of the above benefits are equally valuable for character progression - as a die re-roll at the right time, or an ally’s help can make all the difference in a tough situation.
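The spending rules boil down to a little bookkeeping: four 4 XP advancements, 16 XP in total, carry a character to the next tier. Below is a rough Python sketch of that. The `Character` class and its fields are made up for illustration, and stopping at tier 6 is simply how I read "there are 6 tiers".

```python
from dataclasses import dataclass

ADVANCEMENT_COST = 4       # XP per character advancement
ADVANCEMENTS_PER_TIER = 4  # advancements needed to reach the next tier
MAX_TIER = 6


@dataclass
class Character:
    xp: int = 0
    tier: int = 1
    advancements: int = 0  # advancements bought within the current tier

    def spend(self, cost: int) -> None:
        """Pay for any benefit: 1 XP re-roll, 2 XP short term, 3 XP long term."""
        if cost > self.xp:
            raise ValueError("not enough XP")
        self.xp -= cost

    def advance(self) -> None:
        """Buy one 4 XP advancement and move up a tier after the fourth."""
        self.spend(ADVANCEMENT_COST)
        self.advancements += 1
        if self.advancements == ADVANCEMENTS_PER_TIER and self.tier < MAX_TIER:
            self.tier += 1
            self.advancements = 0


hero = Character(xp=17)
hero.spend(1)  # re-roll a die at a crucial moment
for _ in range(4):
    hero.advance()
print(hero)
```

After the re-roll and four advancements, the character has no XP left and sits at tier 2.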

A word about combat

You might notice that I still haven’t brought up combat. And that’s because there aren’t a lot of additional rules.

Just like in D&D, there are rounds. Combat initiative is a speed task, and turns are taken with as much structure as the group would desire. There’s often no need for precise turn order outside of who goes first – the players or the enemies.

Attacking is a task against GM-given difficulty. So is defending. Effort can be expended to make hitting a target easier. Alternatively, characters can expend effort to get +3 damage on their attacks per level of effort instead.

Distances are measured as immediate (up to 10 ft), short (up to 50 ft), long (up to 100 ft), and very long. Melee attacks happen within an immediate distance, traveling short distance takes an action, and traveling long distance within a round is a level 4 athletics task.
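If you ever need to translate a measured distance into these bands (say, when converting a D&D map), a small lookup does the job. A minimal Python sketch follows; `distance_band` is a hypothetical helper, and the cutoffs are the ones listed above.

```python
def distance_band(feet: float) -> str:
    """Map a distance in feet onto Numenera's abstract range bands."""
    if feet < 0:
        raise ValueError("distance cannot be negative")
    if feet <= 10:
        return "immediate"
    if feet <= 50:
        return "short"
    if feet <= 100:
        return "long"
    return "very long"


for feet in (5, 30, 80, 400):
    print(feet, distance_band(feet))
```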

Combat-related tasks are only limited by imagination. You can distract your enemies, assist your comrades, or swing into battle on a rope yelling “Huzzah”!

Special rolls - natural 1, 19, and 20

To keep dice rolls exciting, Numenera extends the idea of critical success and critical failure.

Natural 1 allows for a free GM intrusion. Something unexpected happens, and you don’t get any experience for it.

Natural 17 and 18 provide +1 and +2 damage respectively when attacking.

Natural 19 gives the player an opportunity to come up with a minor effect (or inflict +3 damage): some minor advantage which usually persists for a round. Maybe an enemy is knocked back, or a merchant impressed by your bartering skills gives you an extra discount.

Natural 20 provides the player with a major effect of their choice (or ups the damage to +4). The cutthroats in a bar spill the location of their next heist, or an enemy gets stunned in battle.
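The special rolls fit in a small lookup table. Here is a Python sketch of that table; the names are illustrative, and the entries simply restate the rules above.

```python
SPECIAL_ROLLS = {
    1: "free GM intrusion (no XP awarded)",
    17: "+1 damage on an attack",
    18: "+2 damage on an attack",
    19: "minor effect, or +3 damage",
    20: "major effect, or +4 damage",
}

BONUS_DAMAGE = {17: 1, 18: 2, 19: 3, 20: 4}


def describe_roll(natural: int) -> str:
    """What a natural d20 result means beyond plain success or failure."""
    if not 1 <= natural <= 20:
        raise ValueError("a d20 only shows 1 through 20")
    return SPECIAL_ROLLS.get(natural, "nothing special")


def bonus_damage(natural: int) -> int:
    """Extra damage if the player takes damage rather than an effect."""
    return BONUS_DAMAGE.get(natural, 0)


for natural in (1, 12, 17, 19, 20):
    print(natural, describe_roll(natural), bonus_damage(natural))
```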

Stories, rules, and common sense

Finally, Numenera and the Cypher System are focused on telling great stories, and many rules are deliberately vague and left up to the GM’s discretion. In Numenera, if you wonder whether you can attempt some task – you most definitely can try, and a good GM will help you fail in spectacular and, most importantly, entertaining ways.

Adjusting to working from home

Like many, I moved to working from home during the COVID-19 pandemic. Parts of California enacted a shelter-in-place order back in March, and it’s been over a month and a half since then. I briefly worked from home back in 2013 as a freelancer - and I really got the whole work/life balance thing wrong. So this time around I’ve decided to approach remote work with a plan.

My day begins around 7 or 8 am, without too much deviation from schedule. I used to bike to work before the pandemic, and I try to head out for a 30 minute ride in the morning a few days a week. There aren’t a lot of people out early, and I love starting my day with some light cardio.

I share breakfast and coffee with my partner, often while catching up on our favorite morning TV show. At the moment I’m being educated on Avatar: The Last Airbender. Ugh, Azula!

Breakfast is followed by a calendar sync. We check whether we have overlapping or sensitive meetings. That way we know which calls either of us needs to take in another room - and which are okay to have in our workspace. Both of our desks are in the living room, and we use noise cancelling headphones throughout the day to help with focus. During the day we convert our bedroom to an ad hoc conference room.

By 9 am, I have my desk set up: I replace my personal laptop with its corporate-issued counterpart. An external webcam helps with the image quality, and a dedicated display, keyboard (Vortex Pok3r with Matt3o Nerd DSA key cap set), and a mouse (Glorious Model O-) alleviate the cramped feeling I get when using a laptop.

Most importantly, I’m showered, groomed, and dressed by this time. While working in whatever I slept in has worked well for occasional remote Fridays, it proved to be unsustainable for prolonged remote work. Whenever I wasn’t dressed for work, I found myself slowly drifting towards the couch, and trading a laptop for my phone. In fact, some days I dress up even more than I used to when going to the office!

This is where the clear separation between home and work is established. I’m fully dressed and have my workstation set up: it’s work time!

I spend the next few hours busy with heads down work, usually working on a design, writing some code, or doing anything which requires concentration. Playing something like a Rainy Cafe in the background helps me stay in the zone.

Back in the office, 11 am used to be my workout time: a gym buddy of mine would consistently exercise at 11 am, and I adopted the habit of joining him over the past few years. I decided not to move the time slot: at 11 am I change into my workout clothes and exercise. 30 to 45 minutes of bodyweight exercises or online classes use up the remainder of my morning willpower. I’m so glad there are thousands of YouTube videos to keep me company!

My partner and I alternate cooking, and the next hour or so is reserved for cooking, lunch together, and cleanup. Remember the noise cancelling headphones? We haven’t heard (and often seen) each other since morning! Getting to share lunch daily has definitely been the highlight of staying at home for me.

After that - back to work: design reviews, meetings, busywork.

I wrap up around 5 pm, and make a point not to work past that. I swap out my work laptop for my own (even if I’m not planning to use it), and stow it away for the night. Disassembling my setup paired with showering and changing into house clothes creates a solid dividing line between work and home.

Cooking dinner together and evening activities follow, but that’s a story for another time. Stay healthy and productive!

How I use Vimwiki

I’ve been using Vimwiki for 5 years, on and off. There’s a multi-year gap in between: some entries are back to back for months on end, while some notes are quarters apart.

Over those 5 years I’ve tried a few different lightweight personal wiki solutions, but kept coming back to Vimwiki due to my excessive familiarity with Vim and the simplicity of the underlying format (plain text FTW).

I used to store my Vimwiki in Dropbox, but after Dropbox imposed a three device free tier limit, I migrated to Google Drive for all my storage needs (and haven’t looked back!). I’m able to view my notes on any platform (including previewing the HTML pages on mobile).

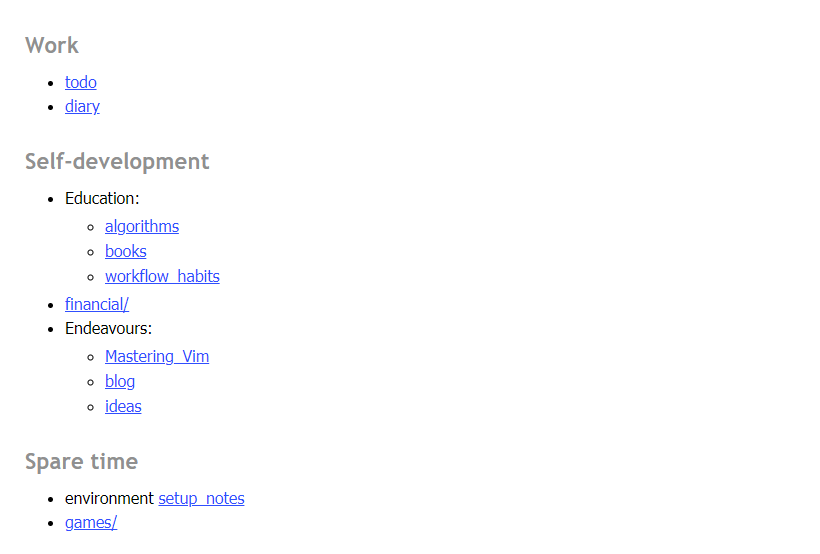

I love seeing how other people organize their Wiki homepage, so it’s only fair to share mine:

I use Vimwiki as a combination of a knowledge repository and a daily project/work journal (<Leader>wi). I love being able to interlink pages, and I find it extremely helpful to write entries journal-style, without having to think of a particular topic or a page to place my notes in.

Whenever I have a specific topic in mind, I create a page for it, or contribute to an existing page. If I don’t - I create a diary entry (<Leader>w<Leader>w), and move any developed topics into their own pages.

I use folders (I keep wanting to call them namespaces) for disconnected topics which I don’t usually connect with the rest of the wiki: like video games, financial research, and so on. I’m not sure I’m getting enough value out of namespaces though, and I might revisit using those in the future: too many files in a single directory is not a problem since I don’t interact with the files directly.

Most importantly, every once in a while I go back and revisit the organizational structure of the wiki: move pages into folders where needed (:VimwikiRenameLink makes this much less painful), add missing links for recently added but commonly mentioned topics (:VimwikiSearch helps here), and generally tidy up.

I use images liberally ({{local:images/nyan.gif|Nyan.}}), and I occasionally access the HTML version of the wiki (generated by running :VimwikiAll2HTML).

I’ve found it useful to keep a running todo list with a set of things I need to accomplish for work or my projects, and I move those into corresponding diary pages once the tasks are ticked off.

At the end of each week I try to have a mini-retrospective to validate if my week was productive, and if there’s anything I can do to improve upon what I’m doing.

I also really like creating in-depth documentation on topics when researching something: the act of writing down and organizing information helps me understand it better (that’s why, for instance, I have a beefy “financial/” folder, with a ton of research into somewhat dry, but important topics - portfolio rebalancing, health and auto insurance, home ownership, and so on).

Incoherent rambling aside, I’m hoping this post will spark some ideas about how to set up and use your own personal wiki.

Google Drive on Linux with rclone

Recently Dropbox hit me with the following announcement:

Basic users have a three device limit as of March 2019.

Being a “basic” user, and relying on Dropbox across multiple machines, I got unreasonably upset (“How dare you deny me free access to your service?!”) and started looking for a replacement.

I already store quite a lot of things in Google Drive, so it seemed like a no-brainer: I migrated all my machines to Google Drive overnight. There was only one problem: Google Drive has official clients for Windows and Mac, but there’s nothing when it comes to Linux.

I found the Internets to be surprisingly sparse on the subject, and I had to try multiple solutions and spend more time than I’d like researching options.

The best solution for me turned out to be rclone, which mounts Google Drive as a directory. It requires the rclone service to be constantly running in order to access the data, which is a plus for me - I’ve accidentally killed the Dropbox daemon in the past and had to deal with conflicts in my files.

Install rclone (instructions):

curl https://rclone.org/install.sh | sudo bash

From then on, the rclone website has some documentation when it comes to the setup. I found it somewhat difficult to parse, so here it is paraphrased:

Launch rclone config and follow the prompts:

- n) New remote

- name> remote

- Type of storage to configure: Google Drive

- Leave client_id> and client_secret> blank

- Scope: 1 \ Full access to all files

- Leave root_folder_id> and service_account_file> blank

- Use auto config? y

- Configure this as a team drive? n

- Is this OK? y

From here on, you can interact with your Google Drive by running rclone commands (e.g. rclone lsd remote: to list top-level directories). But I am more interested in a continuously running service, and mount is what I need:

rclone mount remote: $HOME/Drive

Now my Google Drive is accessible at ~/Drive. All that’s left is to make sure the directory is mounted on startup.

For Ubuntu/Debian, I added the following line to /etc/rc.local (before exit 0, and you need sudo access to edit the file):

rclone mount remote: $HOME/Drive

For my i3 setup, all I needed was to add the following to ~/.config/i3/config:

exec rclone mount remote: $HOME/Drive

It’s been working without an issue for a couple of weeks now - and my migration from Dropbox turned out to be somewhat painless and quick.

Sane Vim defaults (from Neovim)

Vim comes with a set of often outdated and counter-intuitive defaults. Vim has been around for about 30 years, and it only makes sense that many defaults did not age well.

Neovim addresses this issue by shipping with many default options tweaked for a modern editing experience. If you can’t or don’t want to use Neovim - I highly recommend setting some of these defaults in your .vimrc:

if !has('nvim')
  set nocompatible
  syntax on
  set autoindent
  set autoread
  set backspace=indent,eol,start
  set belloff=all
  set complete-=i
  set display=lastline
  set formatoptions=tcqj
  set history=10000
  set incsearch
  set laststatus=2
  set ruler
  set sessionoptions-=options
  set showcmd
  set sidescroll=1
  set smarttab
  set ttimeoutlen=50
  set ttyfast
  set viminfo+=!
  set wildmenu
endif

The defaults above enable some of the nicer editor features, like autoindent (respecting existing indentation), incsearch (search as you type), or wildmenu (enhanced command-line completion). The defaults also smooth out some historical artifacts, like unintuitive backspace behavior. Keep in mind, this breaks compatibility with some older Vim versions (but it’s unlikely to be a problem for most if not all users).